See www.voicingelder.com.

See the VoicingElder article on the VCU front-page news.

Improving Quality of Life for Older Adults with Avatar Life-Review

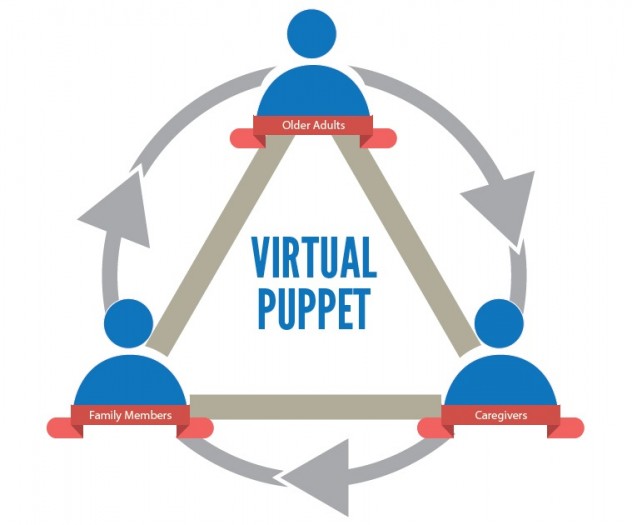

VoicingElder is a reminiscence storytelling platform designed for older adults, using interactive virtual puppets. Storytelling and reminiscence nurture intergenerational sharing and communication, allowing seniors to express and affirm their identities as they review and share their memories. In VoicingElder, the puppeteer (the older adult user) controls an on-screen virtual puppet (avatar) through speech and gesture, enacting digitally embodied therapeutic puppetry. The virtual puppet mimics the user's gestures (head, hands, eyebrows, and so on) in real time, captured via a Microsoft Kinect camera and microphone. Users act out representations of themselves or significant others at various ages (child, teenager, adult, elder), shaping their life history in an engaging way.

The project strives to enhance seniors’ quality of life by engaging with their memories and emotions through virtual puppets. By taking the position of a puppeteer watching their own puppet performance on screen, users can develop new perspectives on their life story, revealing deep memory in “thick” documentation. These unique life histories will help older adults communicate better with family members (across generations), caregivers, and friends.

VoicingElder will detect the emotional content of the older adult’s live speech (using a natural language processing AI algorithm developed by Dr. Faralli, with labels such as sad, happy, angry, positive, and negative) and use it to drive the virtual puppet’s facial expressions, behaviors, and background sounds, facilitating the user's immersion into affective memory.
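As a rough illustration of how a detected emotion label could drive the puppet, the sketch below maps each label named above to a facial-expression preset and a background sound. It is not Dr. Faralli's algorithm, which is not described here; the `PuppetCue` type, the `cue_for` function, and all preset and sound names are hypothetical, and the label is assumed to arrive as a plain string from an upstream classifier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PuppetCue:
    expression: str  # hypothetical facial-expression preset name
    sound: str       # hypothetical background-sound name


# Labels come from the project description; the cue names are invented
# purely for illustration.
CUES = {
    "happy": PuppetCue("smile", "bright_ambience"),
    "sad": PuppetCue("downcast", "soft_ambience"),
    "angry": PuppetCue("frown", "tense_ambience"),
    "positive": PuppetCue("slight_smile", "bright_ambience"),
    "negative": PuppetCue("slight_frown", "soft_ambience"),
}

# Fallback used when the label is missing or not recognized.
NEUTRAL = PuppetCue("neutral", "silence")


def cue_for(label: str) -> PuppetCue:
    """Return the cue for an emotion label, or NEUTRAL if it is unknown."""
    return CUES.get(label.strip().lower(), NEUTRAL)
```

Keeping the mapping in a single table, with a neutral fallback, would let the expressions and sounds be tuned without touching the speech-analysis side.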

This project turns the senior's life review sessions into a playful virtual puppet performance that can be viewed by family members and even subsequent generations in a live or recorded format. VoicingElder supports the beauty, wisdom, and experiential knowledge of older adults’ oral stories, and promotes cross-disciplinary discussion among many areas: art, gerontology, health, technology, psychology, theater, etc.

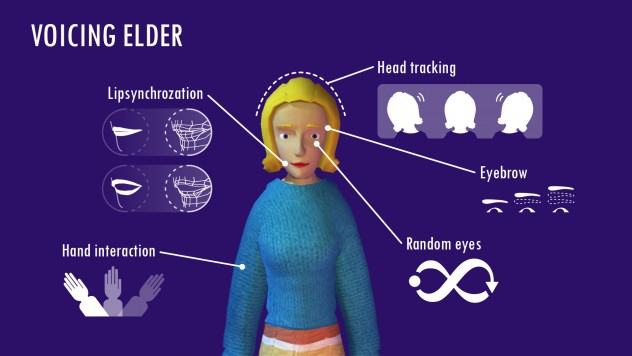

Interactive Features

1. Works with the user's live speech. The user's voice input into the microphone will animate the avatar's lips to produce real-time lip synchronization.

2. Random eye movement. The virtual puppet's eyes move randomly to simulate a typical human gaze.

3. Real-time head tracking and eyebrow motion. The virtual puppet will follow the user's head rotation, and its eyebrows will change with head posture.

4. Body and arm interaction. The virtual puppet's body and arms will follow the user's motions.

5. The facial expression of the virtual puppet will reflect the emotional content of the user's speech (via Dr. Faralli’s algorithm).

6. Background sound will reflect the emotional content of the user's speech (via Dr. Faralli’s algorithm).

7. Four female and four male avatars representing four life stages (childhood, adolescence, adulthood, and old age), plus additional avatars for other persons.

8. The user can choose among the virtual puppets and switch between different representations by mentioning an approximate time period or age during their live speech (via Dr. Faralli’s algorithm).
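The avatar switching in feature 8 can be pictured with a minimal sketch that looks for a spoken age in a transcribed utterance and picks one of the four life stages. This is only an illustration under stated assumptions, not the project's actual algorithm: the age boundaries, the regular expression, and the names `stage_for_age` and `stage_from_utterance` are all hypothetical, and speech is assumed to have already been transcribed to text.

```python
import re
from typing import Optional

# Assumed upper age bounds for each stage; the project does not state them.
STAGES = [(12, "childhood"), (19, "adolescence"), (64, "adulthood")]

# Very simple pattern for phrases like "I was 8", "at age 70", "aged 16".
AGE_PATTERN = re.compile(r"\b(?:i was|at age|aged?)\s+(\d{1,3})\b", re.IGNORECASE)


def stage_for_age(age: int) -> str:
    """Map an age in years to one of the four life stages."""
    for upper, stage in STAGES:
        if age <= upper:
            return stage
    return "old age"


def stage_from_utterance(text: str) -> Optional[str]:
    """Return the life stage implied by a spoken age, or None if none is found."""
    match = AGE_PATTERN.search(text)
    if match is None:
        return None
    return stage_for_age(int(match.group(1)))
```

For example, `stage_from_utterance("When I was 8 we lived on a farm")` selects the childhood stage, while an utterance with no recognizable age returns `None`, so the current avatar would stay in place.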